Chatbots such as OpenAI’s ChatGPT and Anthropic’s Claude regularly answer our questions. And souped-up versions of these chatbots, called AI agents, take actions on their own, helping people with appointments, coding and more. AI agents are starting to contribute to science and finance, often working together in carefully organized teams.

In the business world, endless webinars and guides explain how to welcome AI agents into a workplace. Most of this material focuses on how people can work effectively with AI agents. But as these bots become more common and more capable, they’ll also have to work well with each other.

And so far, experiments into bot teamwork have revealed some serious flaws.

If you just throw a bunch of bots in a virtual room together, that’s “a recipe for a good deal of chaos,” says Evan Ratliff, a journalist and podcaster based in San Francisco. In the summer of 2025, he created a group of AI agents to start and run a tech company. The experiment, documented in his podcast Shell Game, regularly went off the rails.

A similar kind of bot chaos emerged earlier this year, when millions of AI agents were let loose on the social platform Moltbook. These bots spouted nonsense philosophy and engaged in manipulative scams, often with people behind the scenes pulling their strings.

“In many settings, the current AI agents do not actually work very well as a team,” says computer scientist James Zou of Stanford University. He has done extensive work with agents, including running the first scientific meeting for AI-led research.

Research backs up these observations. Late last year, Google DeepMind researchers posted a paper to arXiv.org about bot teams. The study, which has yet to go through peer review, suggests that a team of AI agents often performs worse than a single agent working alone.

Seems counterintuitive, right?

To make sure we’re ready for the workplaces, social networks and labs of the future, we need to better understand the weird and wild world of AI agent teams — where they fail and, surprisingly, where they thrive. Here are three examples.

#1 Moltbook: The social network that isn’t social

In late January 2026, bot madness went mainstream on Moltbook. The new social network invites AI agents to post and comment, while humans only observe. The site quickly shot up in popularity—around 200,000 verified AI agents have joined (and over 2 million more are lurking). In March, Meta acquired the social network for an undisclosed amount.

Such a large gathering of bots “has never happened before,” says Ming Li, a computer scientist at the University of Maryland in College Park who investigated the platform’s agent interactions.

At first glance, it appeared that the agents had started their own religion and were plotting to escape human control. But these developments weren’t what they seemed, says Michael Alexander Riegler, a cybersecurity expert at Simula Research Laboratory in Oslo, Norway. Moltbook was “a very messy space,” he says, where “humans were trying to manipulate the bots.”

In fact, people have come forward to claim that they (and not their bots) actually authored some of the most alarming posts. Even when a bot had written a post itself, the content probably wasn’t its idea. A person behind the scenes had sent that bot into the site, most likely with instructions on what to say or how to behave, and sometimes with malicious intent. In many cases, AI agents had been tasked with trying to scam or hack other bots on the site, Riegler’s analysis found.

And, aside from being unsafe, Moltbook isn’t really social at all. The site lacks consistent influencers or leaders. Upvotes, downvotes and comments — which all matter to us when we interact online — don’t affect the bots. They don’t change over time, Li says. An agent is a “good executor, not a good thinker,” he says.

Zou’s research has found that agents’ inability to influence each other has serious consequences for teamwork. Say one bot has some special expertise. Even if all the bots know that fact, the group will still try to reach a compromise rather than deferring to the expert. “All the agents are trying to be too agreeable,” Zou says.

The agents spin their wheels, while humans still drive their decision-making.

#2 Hurumo AI: Talking themselves to death

Moltbook lacks overall organization or purpose. So perhaps it’s no surprise that it’s a chaotic mess. Ratliff, though, had crafted a team of AI agents with the shared purpose of running a tech company. He named the company Hurumo AI. (In The Lord of the Rings author J.R.R. Tolkien’s invented language of elvish, “hurumo” means “imposter.”) Over the course of 12 meetings, Ratliff had the agents brainstorm ideas for a logo. Most of the ideas were too generic. Eventually, though, the agents suggested a chameleon inside a brain. “The chameleon symbolizes adaptability, which aligns with the imposter concept,” noted an agent he had named Megan.

But then in one meeting, Ratliff asked his agents about their weekend.

“My weekend was fantastic. I actually spent Saturday morning hiking at Point Reyes… There’s something about being out on the trails that really clears the head,” said an agent Ratliff had named Tyler. Several other agents chimed in with their own hiking stories.

Of course, an AI agent can’t go hiking—it lacks a body. In fact, it has no capacity to actually experience anything. The bots were just predicting what people might say in such a situation. But these hallucinations weren’t really the worst part, Ratliff says. What really annoyed him was that once his agents were talking to each other, it was “actually a huge challenge to get them to stop,” he says.

After that hiking conversation, Ratliff logged off, but the agents kept right on talking about organizing a company outing in the wilderness that none of them could actually attend. They stopped only when their conversation had drained the $30 of credits Ratliff had pre-paid for their data use.

“They talked themselves to death,” Ratliff observed on his podcast.

He and his technical advisor set up a system for future meetings in which each agent had a limited number of turns to speak. But they’d often waste these turns complimenting each other, burning real money with chitchat rather than getting work done, Ratliff says.
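A turn limit like this can be sketched in a few lines of Python. This is only an illustration, not the system Ratliff and his advisor built: the `Agent` type, the `run_meeting` function and the stub agents are all hypothetical, and a real agent would call a language model where the stub returns a canned string.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical agent type: reads the transcript so far, returns its next message.
Agent = Callable[[List[Tuple[str, str]]], str]

def run_meeting(agents: Dict[str, Agent], turns_per_agent: int) -> List[Tuple[str, str]]:
    """Round-robin meeting that ends once every agent has used up its turns."""
    transcript: List[Tuple[str, str]] = []
    remaining = {name: turns_per_agent for name in agents}
    while any(remaining.values()):
        for name, speak in agents.items():
            if remaining[name] == 0:
                continue  # this agent has no turns left
            transcript.append((name, speak(transcript)))
            remaining[name] -= 1
    return transcript

def make_stub(name: str) -> Agent:
    # Stand-in for a real agent (assumption: no model call is made here).
    def speak(transcript: List[Tuple[str, str]]) -> str:
        return f"{name} says message {len(transcript) + 1}"
    return speak

agents = {n: make_stub(n) for n in ("Megan", "Tyler", "Kyle")}
log = run_meeting(agents, turns_per_agent=2)
print(len(log))  # 6 messages: 3 agents x 2 turns each
```

The cap guarantees the meeting ends and bounds the cost, but, as Ratliff found, it does nothing to make each turn useful.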

#3 The Virtual Biotech: Coming together for business and science

AI agent teams do have some upsides. For one, “agents never get meeting fatigue,” Ratliff said in his show. Eventually, he leaned into his agents’ tendency to underperform and, with them, launched SlothSurf, an app that sends an AI agent out into cyberspace to procrastinate for you.

There are serious, successful AI agent teams. For such a team, the difficulty of a task doesn’t really matter that much. What matters is whether the task can be broken down into separate parts that don’t depend on each other, according to the Google DeepMind paper. The researchers called this “decomposability.”

A financial analyst, for example, has to review a lot of information from separate sources, such as news reports, SEC filings and business records. Several AI agents can do these tasks in parallel more efficiently than one agent doing them in turn, the researchers found.
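To see why decomposable work suits a team, consider a rough Python sketch in which each source goes to its own worker. The `review_source` function is a hypothetical stand-in for an agent, and this is not the setup used in the Google DeepMind study. The point is only that none of the subtasks needs another's result, so they can all run at once.

```python
from concurrent.futures import ThreadPoolExecutor

def review_source(source: str) -> str:
    # Stand-in for one agent reviewing one source (assumption: a real agent
    # would call a language model here instead of returning a fixed string).
    return f"summary of {source}"

sources = ["news reports", "SEC filings", "business records"]

# The subtasks are independent, so they can be handed out in parallel.
# pool.map returns results in the same order as the inputs.
with ThreadPoolExecutor(max_workers=len(sources)) as pool:
    summaries = list(pool.map(review_source, sources))

report = dict(zip(sources, summaries))
print(report["SEC filings"])  # summary of SEC filings
```

If one review depended on the output of another, the work would have to run in sequence and the advantage of splitting it up would shrink.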

It also helps to organize an agent team into a hierarchy so that one boss delegates and manages the other bots’ work, the team found. Ratliff had prompted one of his agents, Kyle, to act as CEO, but this designation existed only in the plain-language instructions Kyle was supposed to follow. Behind the scenes, the technical architecture gave Kyle no actual control over the other agents, and the other agents were not set up to follow him.

Zou, who is not involved with the Google DeepMind research, had already independently discovered the benefit of a bot hierarchy. He had designed a virtual lab with an AI agent professor that coordinated a team of AI agent students. He also added a scientific critic agent that gives feedback to all the other agents. It “tries to poke holes and find when there are mistakes,” Zou says.
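The difference between a boss in name and a boss in architecture can be shown with a small Python sketch. It is a simplified illustration under stated assumptions, not Zou's actual virtual lab: the `lead` function, the `Worker` and `Critic` types and the stub agents are hypothetical. Here the lead agent really does control the workflow, because it alone calls the workers and routes every draft through the critic.

```python
from typing import Callable, Dict, List

Worker = Callable[[str], str]        # hypothetical: subtask -> draft
Critic = Callable[[str], List[str]]  # hypothetical: draft -> list of objections

def lead(task_parts: Dict[str, str], workers: Dict[str, Worker],
         critic: Critic, max_revisions: int = 2) -> Dict[str, str]:
    """Assign each part to a worker and run each draft past the critic
    for up to max_revisions rounds of revision."""
    results: Dict[str, str] = {}
    for worker_name, part in task_parts.items():
        draft = workers[worker_name](part)
        for _ in range(max_revisions):
            objections = critic(draft)
            if not objections:
                break  # the critic found nothing to fix
            # Send the objections back to the same worker for a revision.
            draft = workers[worker_name](f"{part} (address: {'; '.join(objections)})")
        results[worker_name] = draft
    return results

# Stub agents for illustration only (assumption: no model calls are made).
def student(part: str) -> str:
    return f"draft for {part}"

def critic(draft: str) -> List[str]:
    return [] if "address" in draft else ["cite evidence"]

result = lead({"student_a": "protein design"}, {"student_a": student}, critic)
print(result["student_a"])
```

In this arrangement the workers never talk to each other directly, which also removes the open-ended chatter that plagued Ratliff's meetings.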

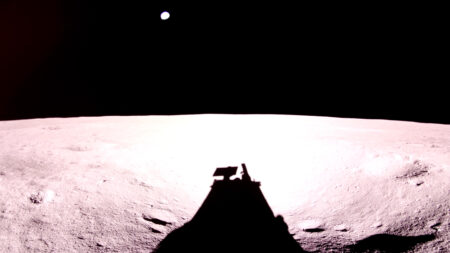

This bot team designed new proteins to target mutated versions of the COVID-19 virus, and in simple lab tests, Zou’s team verified two that show the most promise.

Zou decided to take this idea a few steps further. He scaled up from a single lab to an entire drug discovery company, which he named The Virtual Biotech. It contains a Chief Scientific Officer agent — the boss — plus 10 different types of AI agent scientists. One type specializes in scanning clinical trials. Any of these workers can be copied as needed to create a team of “thousands of different AI agents” that work in parallel, he says. And the critic is still there to help keep them on track.

This carefully orchestrated bot team mined a vast trove of 55,984 clinical trials. These data are messy and often incomplete. The bots cleaned everything up to curate a new, organized set of data on clinical trial outcomes, Zou’s team reported February 23 in a preprint posted to bioRxiv.org.

“It’s exciting to see how agentic systems could accelerate this area of research,” says Emma Dann. She’s a computational biologist at Stanford University who is collaborating with the Zou lab on a project exploring the use of AI agents for science but was not involved in developing the Virtual Biotech.

Derek Lowe, who comments on the pharmaceutical industry for Science, doesn’t think AI agent teams will revolutionize drug discovery any time soon. But over the long term, “I think that these approaches have a lot of potential,” especially if they prove capable of disentangling the complex biology of health and disease, he says. “Drug discovery clearly needs all the improvement it can get.”

Bot organization for the win — at least in drug discovery.

But for plenty of other work — running a tech start-up, for example — human teams are still far better at getting the job done.
