Mathematician Kevin Buzzard of Imperial College London is training computers how to prove one of the most famous problems in math history: Fermat’s last theorem.

Resolving the problem isn’t the point. There’s already an accepted proof that was finalized in 1998. That work is a tortuous maze of mathematics that fills about 130 pages over two papers. It spans mathematical fields and unites abstract ideas that previously seemed to have little to say to one another. To know the proof is to know a wide swath of mathematics. In the future, Buzzard says, a computer program that can verify something so sprawling will be able to help mathematicians find, scrutinize and solve a wide range of problems.

For years, Buzzard and a handful of mathematicians have been working on projects like this to formalize mathematics. Historically, formalization has involved expressing mathematical ideas as precisely as possible, erasing all ambiguity. Today, that means translating definitions and theorems into computer code so that a specialized program can verify every painstaking step.

Formalization “is a new paradigm for mathematical proof writing that essentially demands the proof writer be way more rigorous than usual,” says mathematician Emily Riehl of Johns Hopkins University. “The computer is not really filling in the details.” The person who is writing the proof has to do that instead.

But formalizing the proof of Fermat’s last theorem is just the cornerstone of an even larger vision: to build a digital library of all of mathematics that will enable computers to be useful assistants to mathematicians.

Even now, most mathematicians write proofs that rely on spoken or written descriptions and intuition, traditional tools that until recently seemed out of the reach of computers. As such, modern formalization has long been a niche effort because it requires expressing mathematical ideas as code.

Now, the explosion in artificial intelligence has propelled efforts, spearheaded by technology companies, to combine large language models with theorem provers to develop systems capable of autoformalization. In theory, such systems may ultimately be able to do things that humans can’t.

That’s a divisive goal, and one that troubles many mathematicians for how it could reshape mathematical research and progress. What began as a philosophical question — What is the maximum precision possible in a mathematical proof? — has now become an existential one: Will the quest for precision upend the field?

“We’re really at the cusp of a change,” says Patrick Shafto, a mathematician and computer scientist at Rutgers University in Newark, N.J., and at DARPA, a research and development agency within the U.S. Department of Defense.

“Mathematics is now basically practiced at a board, as it was 100 years ago. But I think in five years, it is very likely that every single young mathematician uses AI,” Shafto says. “Advances in AI and formalization have the possibility of really highlighting the interesting aspects of being human and our quest for knowledge, as humans.”

My robot assistant

AI may have acted like an accelerant thrown on the fires of formalization, but the idea of using a machine for mathematical proofs isn’t new. In 1956, researchers at the RAND corporation introduced a computer program (they called it a “logic theory machine”) that checked proofs published in Principia Mathematica, a landmark series of books by mathematicians Bertrand Russell and Alfred North Whitehead.

“I am delighted to know that Principia Mathematica can now be done by machinery,” Russell wrote in a letter to Herbert Simon, one of the researchers behind the thinking machine. “I wish Whitehead and I had known of this possibility before we both wasted 10 years doing it by hand.”

Even though the practice is not widespread, some mathematicians have used computer programs called interactive theorem provers in the last few decades to verify existing mathematical proofs. In 1998, mathematician Thomas Hales announced that he and his student Samuel Ferguson had used a computer to prove the Kepler conjecture, a statement about the optimal way to stack spheres that was originally posed by Johannes Kepler in the 17th century.

The proof met some resistance from other mathematicians, who argued that because the computer had churned through so many enormous, complicated calculations representing all possible configurations of stacked spheres, humans couldn’t check the accuracy of the answers, and therefore couldn’t verify the reasoning. So from 2003 to 2014, Hales used digital assistants to formalize and verify his own proof.

In February, by combining AI with an interactive theorem prover, Ukrainian mathematician Maryna Viazovska and others finished formalizing proofs of the Kepler conjecture in eight and 24 dimensions — digital versions of work that had earned Viazovska a Fields Medal in 2022.

Buzzard’s journey with formalization began in 2017 with a kind of mathematical midlife crisis. He had just reviewed a paper for publication in a math journal and, after a lengthy exchange with the paper’s author, couldn’t determine whether the argument was rigorous.

That frustration led him to think broadly about the state of mathematics — and what he thought it could be. “And I got quite unhappy with the state of things,” he said during a talk in September. He began wondering: Could technology take the guesswork out of verifying math? After all, mathematicians don’t get into the field because they want to check beneath the hood of other proofs; they want to do something new. If verification could be offloaded to a machine, why not?

Buzzard began learning how to use Lean, which is both a programming language and an interactive theorem prover. Lean first appeared in 2013, the brainchild of Leo de Moura, a computer scientist at Microsoft, who designed it as a way to verify mathematical arguments, especially in computer code. Lean is the same theorem prover used to formalize Viazovska’s proof in February.

The more Buzzard learned, the more excited he got. He began to see formalization as the act of digitizing mathematics, which in turn would modernize the way that mathematicians use machines. He likens it to the digitalization of music. When music companies began selling CDs, Buzzard says, he at first dismissed the technology as a way to force listeners to re-buy music they already owned. Then he realized that CDs allowed people to access, share and interact with music in ways previously inconceivable, a change amplified by the advent of streaming services.

“Digitizing music has completely turned the world of music on its head,” Buzzard says. “If we digitize mathematics, maybe at some point it will turn math on its head.” He looked back at his own education, and how he taught math, and realized people had been learning the subject in the same way for the last century. It was time to modernize.

And Buzzard decided to start with a centuries-old equation that was, until recently, the most famous unsolved problem in math.

A big mystery in a tiny margin

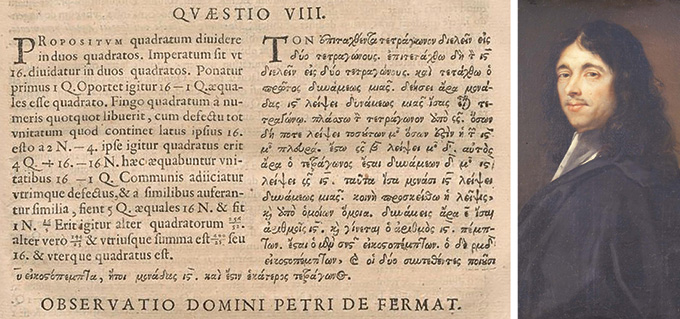

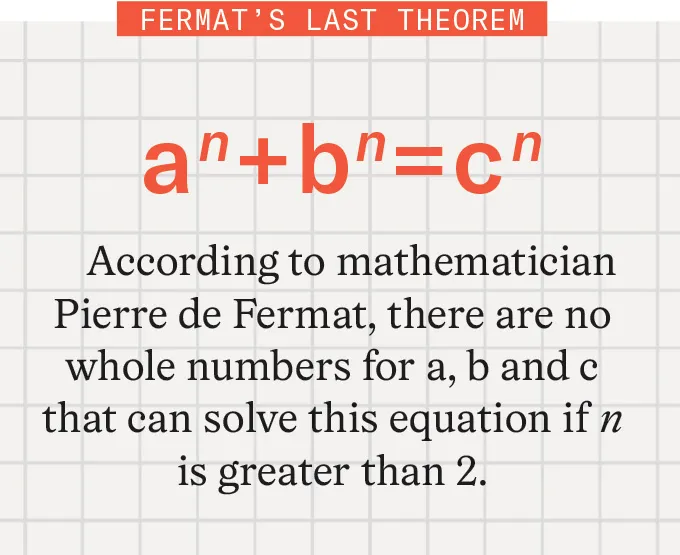

According to legend, in or around 1637, French mathematician Pierre de Fermat scribbled a problem and a note in a copy of Arithmetica, a book by third-century Greek mathematician Diophantus. The problem involves this equation: an + bn = cn. If n = 2, then we know there are infinitely many solutions. That’s because in that case, the equation becomes the Pythagorean theorem and a, b and c correspond to the side lengths of right triangles.

Fermat stated that there are no whole numbers for a, b and c that can solve this equation if n is greater than 2. Next to the problem, Fermat wrote in Latin: “I have a truly marvelous demonstration of this proposition that this margin is too narrow to contain.”

Fermat’s son discovered the book and the note, but not until after his father’s death. The theorem was easy to state and hard to prove, and Fermat’s missing proof vexed mathematicians for centuries. No one ever found his “truly marvelous” argument, and no mathematician ever conjured a proof that might remotely fit that description. Some question whether it ever existed, or conjecture that whatever proof Fermat had in mind was fatally flawed. It’s tempting to view Fermat’s statement as a practical joke with extraordinarily long legs.

British mathematician Andrew Wiles finally cracked it in the late 20th century and later collaborated with mathematician Richard Taylor to finalize it. Their proof used arcane, far-reaching mathematical concepts that weren’t around in the 17th century, ideas that bridge mathematical fields that once seemed unconnected.

Over centuries, by probing Fermat’s simple problem mathematicians have made enormous breakthroughs in many fields beyond number theory, the field most closely associated with the original problem. In one of the most significant, German mathematician Ernst Kummer proved in 1847 that the theorem held for the regular primes — a subset of prime numbers. He did so by developing ideas that laid the groundwork for a new field called algebraic number theory.

In 2023, with support from the U.K.’s Engineering and Physical Sciences Research Council, Buzzard launched his formalization project with Fermat’s last theorem partly because of the proof’s size and importance, and partly because many of his colleagues at Imperial College London are exploring ideas used in the proof. He knew it would be a Herculean, messy task to encode every definition and lemma — akin to a mini-theorem embedded in a larger proof — that plays some role in the overall scheme. And it’s been a rocky road. “I’m sort of all over the place, and I’ve had some failed starts,” he says.

He’s not toiling alone. At first, Buzzard says, about 30 people were contributing to his formalization effort by writing code for Lean, all of them familiar names and faces. Many more have reached out with ideas or otherwise tried to join the effort, he says, and just over 60 have had their coded contributions verified and accepted. Still, the project has grown into an interdisciplinary collaboration on a scale that Buzzard couldn’t have imagined. Anonymous number theorists are reaching out with ideas, he says. Last August, he says, he went camping at a music festival for a week and returned to find 7,000 unread messages about various aspects of the proof.

In January, the effort reached one of its first major milestones. “We proved that a certain thing was finite,” paving the way for the next step, Buzzard says. The effort required for that milestone, however, has led him to doubt whether they’ll finish in his targeted timeline of five years.

One of the largest challenges, Buzzard says, is figuring out how to quickly build Lean’s library of mathematical knowledge. This is a bottleneck for AI applications in math, too. “In this whole area of AI for math is that there’s a terrible lack of interesting datasets,” he says.

In a separate project funded by Renaissance Philanthropy, Buzzard and Rutgers mathematician Alex Kontorovich are further contributing to Lean’s library — and expanding its applicability — by formalizing problems from a list of recent, particularly thorny theorems representing the cutting edge of mathematics in the 21st century.

The implications reach far beyond Buzzard’s projects. An expanding volume of mathematical knowledge could enable working mathematicians — if they were so inclined — to find fault lines in new proofs, or determine whether certain conjectures could hold up. Referees and editors who review papers for journals would be free to focus on the big ideas behind submitted papers rather than the excruciatingly fine details of the logic behind the proof.

“That’s game changing,” Riehl says. “Proofs are hard, and the papers are already very long.” Errors can slip through.

A theorem prover with access to a robust library of mathematical knowledge could be used to identify hallucinations and other mistakes in mathematical proofs generated by AI programs. Having a proof be 95 percent correct, after all, may mean the proof isn’t correct at all. “One hallucination can break an entire mathematical argument because that’s the nature of mathematics,” Buzzard says.

For that reason, tech companies have been developing programs that combine AI tools like Google’s Gemini or OpenAI’s ChatGPT with the fact-checking rigor of Lean. So has the U.S. government: In early 2025, DARPA launched a program called Exponentiating Mathematics, or expMath, with the goal of using AI to accelerate the rate of mathematical discovery, primarily by offloading the finer details of constructing a proof.

All of these efforts tie directly into a more controversial and quickly evolving issue facing mathematics today: figuring out how AI is going to change the field, and whether the AI math invasion is a good thing.

A growing AI specter

The problem with large language models and math, to date, has largely been one of accuracy. To be fair, LLMs like those that power ChatGPT and Anthropic’s Claude are better at math problems than anyone expected, and they have improved with new iterations. But they’re not perfect.

“If you go to ChatGPT and ask it to prove a theorem, it spits out a text,” Riehl says. It might sound good and look good and use correct terms, she says. “But there’s nothing in the way that large language models are designed to guarantee that [it’s] correct.” That’s because they’re designed to respond to queries using probability and are not prioritizing accuracy. And even if it is 99 percent correct, she says, that’s not good enough for a math proof.

When combined with a theorem prover like Lean, though, LLMs get much better.

Last July, the AI company Harmonic made headlines after its program Aristotle, which uses Lean to verify and refine its work, scored high enough for a gold medal, the highest prize, in the annual International Mathematical Olympiad. During this two-day event, participants, all under the age of 20, work through six exceptionally difficult problems. More than 600 human contestants entered the 2025 contest held in Queensland, Australia; 72 scored at least 35 out of a possible 42 points, earning a gold medal. In addition to Aristotle, AI programs used by Google and OpenAI similarly carried out gold medal–level work.

Some mathematicians didn’t see the olympiad accomplishments as showing anything meaningful about the way math is actually done. But more interesting results soon emerged. In July, Rutgers’ Kontorovich and Terence Tao, a UCLA mathematician and Fields Medalist, announced that progress on their 18-month effort to formalize something called the strong prime number theorem had slowed. But then in September, a company called Math, Inc., supported by a grant from the DARPA expMath project, announced that it had used its program, called Gauss, to finish the task in just three weeks.

Gauss combined Lean with AI language models to autoformalize the remainder of the proof — that is, the AI program translated definitions and arguments into Lean, which checked the entire argument for accuracy. More recently, in January, researchers reported using Aristotle and GPT-5.2 to generate, formalize and verify a proof of a problem posed by prolific Hungarian mathematician Paul Erdős in 1975. This is the latest in a recent string of proofs of Erdős problems that used AI in some way.

So far, Buzzard greets advances like these with skepticism. Right now, there are no guardrails, he says. And even though Lean reports that AI-generated code is accurate, it may not actually represent the theorem that the mathematician thought they were proving.

At the same time, Buzzard admits that the picture could change quickly given the rapid speed of AI advancement. So far, he hasn’t seen any AI advances that would help him in his work. But he allows that it’s possible in five years that some tool could emerge that would make short work of formalizing the proof of Fermat’s last theorem. “I do wonder whether autoformalization will get to the point where it will just, you know, be able to eat the literature,” Buzzard says.

Helping humans

Many mathematicians predict that humans will always be necessary in math, but because of the use of AI and formalization, their role could change dramatically.

“The problem-solving aspect of mathematics will basically vanish,” says mathematician and computer scientist Christian Szegedy of Math, Inc. He previously helped develop Google DeepMind’s AlphaProof program and co-led the Elon Musk–founded company xAI. The new job of humans in math, he says, will be “to steer the exploration of mathematics to the areas that we actually care about,” rather than muddling through the logic and fine details of a proof. He sees the rise of AI-driven autoformalization as a way toward creating a digital, brilliant assistant.

Szegedy thinks real progress will be marked by AI’s ability to reason in new and creative ways. He predicts that AI systems will achieve “superhuman intelligence” in math — being able to solve problems that humans can’t — this year. So far, that hasn’t happened.

Szegedy also predicts that at some point, AI models will be better at formalizing proofs than humans, which doesn’t seem out of reach given the fast pace of development in 2025. Soon, he thinks, the models will be able to create a proof from scratch. “And then, the game is over.” He doesn’t think humans will be out of the game; he means that the essential role of the mathematician will be purely creative, relying on an AI collaborator to work out the details.

DARPA’s Shafto, who leads the expMath project, sees the changes as giving mathematicians more time and space to think about ideas rather than details. “If you talk to mathematicians, of course, yes, they prove things and want them to be correct, but that’s not what they’re doing most of the time,” he says. “They’re talking about ideas and how they relate and what might work. Many of them would be happy to have a student or collaborator whom they could trust to sort of prove their tiny lemmas for them.”

Others in the field, though, eye the coming AI wave with skepticism and concern for the future. “Many of my colleagues have absolutely no interest in it,” says mathematician Aravind Asok at the University of Southern California in Los Angeles.

In recent years, Asok says, AI companies have recast mathematical accomplishment as a tool of legitimization. Math itself, he says, becomes a problem to be solved. He finds that notion misguided and “a complete misapprehension of what mathematics is.” The insistences that math can be solved by the abilities of AI models, or that the primary goal is accuracy, require a narrow view of the field.

But it’s a view that has already infiltrated his classroom: Asok says he no longer assigns homework because too many of his graduate students use AI to generate answers. That defeats the purpose. “They need to struggle and engage with [the work] in a way to really build up their own intuitions,” he says. But it’s much faster to ask ChatGPT.

Asok worries that conversations around AI and math focus too closely on correctness. That’s important, he says, “but making mistakes is part of learning.” There have been plenty of mistakes, he adds, that have helped the field of research mathematics move forward.

Formalization is a powerful tool that could help push math in interesting directions, but Asok worries that if students learn math as something to be done with AI, then tomorrow’s mathematicians will lack the creativity needed to find truly new frontiers. “It’s like saying that there’s only one way to have music, or only one way to talk in a conversation,” he says.

Asok also worries that AI may be a threat to the profession because of how progress is perceived. Mathematicians often rely on federal funding, he says, and if the U.S. government adopts the narrative that math itself has been solved by AI companies, support for new work and new ideas could wane. The teaching of math, he says, might be offloaded to AI agents and programs. “I feel like the professional status of mathematicians could change immensely.”

Buzzard maintains that, with or without AI, formalization can help bring math and math education into a modern age. Mathematicians would benefit from an interactive theorem prover with access to verified mathematical information not only to check their work, but also as a proving ground for new AI-generated work, in part to separate sloppy code from bona fide advances.

“I just want to make my colleagues’ lives better,” Buzzard says. “I’m not trying to destroy them. I’m actually trying to help them.”

Read the full article here